Differentially Private GAN

Introduction

- The paper proposes a differentially private generative adversarial network (DPGAN).

- It uses the Wasserstein distance, which gives a more meaningful training signal than the JS divergence, i.e. it builds on WGAN.

Framework

- GAN : minimax game between generator and discriminator.

- Based on the Wasserstein GAN (Arjovsky et al., 2017) framework
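For reference, the WGAN minimax game can be written as below. This is the standard objective from Arjovsky et al. (2017), not a formula taken from these notes; the symbols $g_\theta$ (generator), $f_w$ (discriminator, or critic) and $\mathcal{W}$ (the compact parameter set enforced by weight clipping) are notation assumed here.

$$
\min_{\theta} \max_{w \in \mathcal{W}} \; \mathbb{E}_{x \sim p_{\mathrm{data}}}\left[f_w(x)\right] - \mathbb{E}_{z \sim p_z}\left[f_w(g_\theta(z))\right]
$$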

How can differential privacy be achieved during learning?

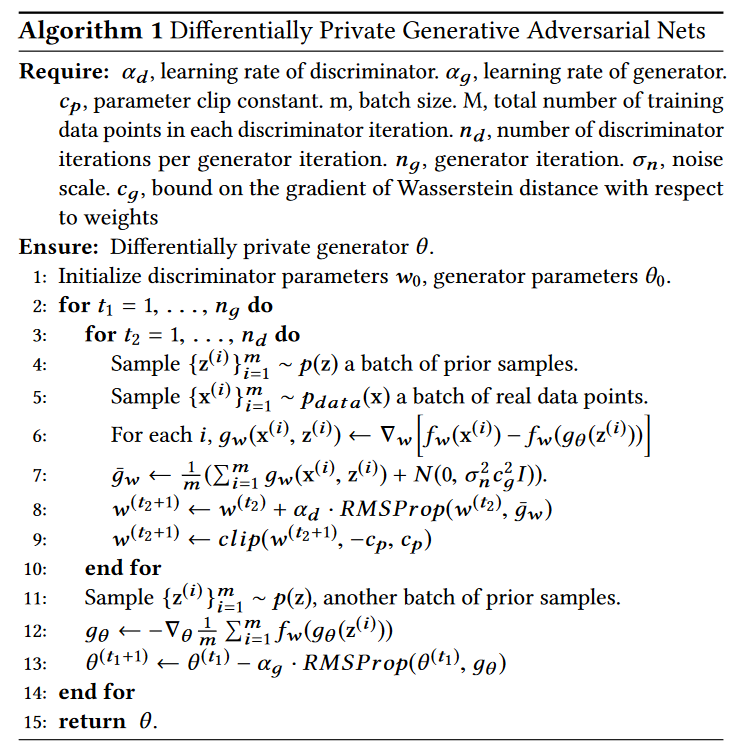

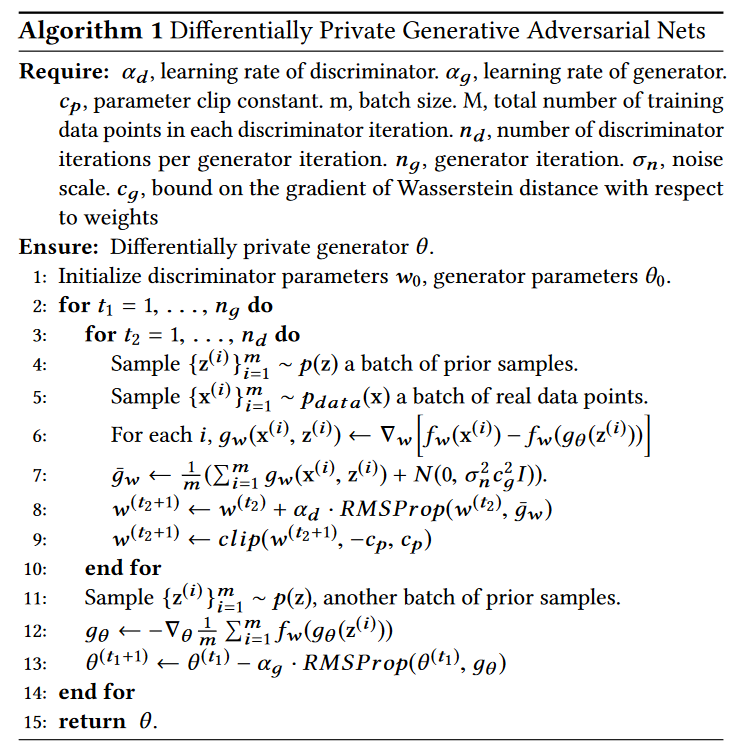

Algorithm

DPGAN Algorithm

- Line 7 of the algorithm: Gaussian noise is added to the gradient of the Wasserstein distance.

- Line 9: weight clipping. (What about using the gradient penalty term instead?)
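The two steps above (noisy gradient, then weight clipping) can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes a linear critic $f_w(x) = w \cdot x$ so the per-example gradient is available in closed form, uses plain gradient ascent in place of whatever optimizer the paper uses, and assumes the noise is scaled by a gradient bound before averaging. The function name `dp_critic_step` and all parameter names are hypothetical.

```python
import numpy as np

def dp_critic_step(w, real_batch, fake_batch, lr, noise_std, grad_bound, clip_value, rng):
    """One noisy update of a linear critic f_w(x) = w @ x (illustrative only)."""
    m = real_batch.shape[0]
    # For a linear critic, the gradient of f_w(x) - f_w(x_fake) w.r.t. w is x - x_fake.
    per_example_grads = real_batch - fake_batch
    # Add Gaussian noise to the summed gradient, then average over the batch
    # (assumed noise scaling: noise_std * grad_bound).
    noise = rng.normal(0.0, noise_std * grad_bound, size=w.shape)
    noisy_grad = (per_example_grads.sum(axis=0) + noise) / m
    # Plain gradient ascent on the Wasserstein estimate (assumption: no adaptive optimizer).
    w = w + lr * noisy_grad
    # Weight clipping keeps the critic parameters inside a compact box.
    return np.clip(w, -clip_value, clip_value)

# Usage with synthetic data: real samples centered at 1, "generated" samples at 0.
rng = np.random.default_rng(0)
real = rng.normal(1.0, 1.0, size=(8, 3))
fake = rng.normal(0.0, 1.0, size=(8, 3))
w_new = dp_critic_step(np.zeros(3), real, fake, lr=0.05, noise_std=1.0,
                       grad_bound=1.0, clip_value=0.01, rng=rng)
```

Only the critic touches the real data, so the noise is injected there; the generator is updated from the critic's output alone.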

Privacy Guarantee

- $\theta$: parameters of the generator, defined through the discriminator parameters $w$

- $\epsilon$: privacy budget (a smaller budget guarantees a higher level of privacy)

Consider the discriminator update procedure in the algorithm above, for a fixed outer-loop iteration $t_2$.

Since this procedure produces a new output from the dataset $D$, an auxiliary input $\mathrm{aux}$, and the noise structure, it can be regarded as a randomized algorithm, written as

$$\mathcal{A}_p(D) = M(\mathrm{aux}, D)$$

Thus, we can define the privacy loss for $M$ as follows.

Definition

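The privacy loss at an output $o$ is defined as

$$c(o; M, \mathrm{aux}, D, D') = \log \frac{P[M(\mathrm{aux}, D) = o]}{P[M(\mathrm{aux}, D') = o]}$$

where $D, D'$ are neighboring datasets. We can also define the privacy loss random variable by evaluating $c$ at a random output of the mechanism:

$$C(M, \mathrm{aux}, D, D') = c(M(\mathrm{aux}, D); M, \mathrm{aux}, D, D')$$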
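To make the definition concrete, here is a small sketch for a hypothetical Gaussian mechanism that releases a scalar query plus $\mathcal{N}(0, \mathrm{std}^2)$ noise. The mechanism, the function names, and the query values are assumptions chosen for illustration; since the output is continuous, densities stand in for the probabilities in the definition.

```python
import math

def gaussian_log_pdf(o, mean, std):
    """Log density of N(mean, std^2) at the point o."""
    return -0.5 * math.log(2 * math.pi * std**2) - (o - mean) ** 2 / (2 * std**2)

def privacy_loss(o, mean_d, mean_d_prime, std):
    """Log ratio of the output densities under D and under its neighbor D'."""
    return gaussian_log_pdf(o, mean_d, std) - gaussian_log_pdf(o, mean_d_prime, std)

# The query evaluates to 1.0 on D and 0.0 on the neighboring D' (sensitivity 1).
loss_at_mean = privacy_loss(1.0, mean_d=1.0, mean_d_prime=0.0, std=1.0)
loss_at_midpoint = privacy_loss(0.5, mean_d=1.0, mean_d_prime=0.0, std=1.0)
```

An output halfway between the two query values is equally likely under both datasets, so its privacy loss is zero; outputs closer to the value on $D$ have positive loss.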

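Log moment generating function

$$\alpha_M(\lambda; \mathrm{aux}, D, D') = \log \mathbb{E}_{o \sim M(\mathrm{aux}, D)}\left[\exp\left(\lambda\, c(o; M, \mathrm{aux}, D, D')\right)\right]$$

where $c$ is the privacy loss.

Moments accountant

$$\alpha_M(\lambda) = \max_{\mathrm{aux}, D, D'} \alpha_M(\lambda; \mathrm{aux}, D, D')$$

See (Abadi et al., 2016).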
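As a rough illustration of how the moments accountant is used, the sketch below tracks a plain Gaussian mechanism with sensitivity 1 and no subsampling, for which the log moment has the closed form $\lambda(\lambda+1)/(2\sigma^2)$. It relies on two properties from Abadi et al. (2016): log moments add up across composed steps, and $\delta = \min_\lambda \exp(\alpha(\lambda) - \lambda\epsilon)$. The function names and the integer grid over $\lambda$ are assumptions, and the privacy amplification from sampling is deliberately left out.

```python
import math

def gaussian_log_moment(lam, sigma):
    """Log moment of one Gaussian-mechanism step (sensitivity 1, no subsampling)."""
    return lam * (lam + 1) / (2 * sigma**2)

def epsilon_from_moments(sigma, steps, delta, max_lambda=64):
    """Smallest epsilon on an integer lambda grid implied by the tail bound."""
    best = math.inf
    for lam in range(1, max_lambda + 1):
        # Composability: the log moments of the individual steps add up.
        total = steps * gaussian_log_moment(lam, sigma)
        # Solve delta = exp(total - lam * eps) for eps.
        best = min(best, (total + math.log(1 / delta)) / lam)
    return best

eps_short = epsilon_from_moments(sigma=4.0, steps=10, delta=1e-5)
eps_long = epsilon_from_moments(sigma=4.0, steps=100, delta=1e-5)
```

Running more steps at the same noise level spends more of the privacy budget, so `eps_long` comes out larger than `eps_short`.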

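Lemma 1

Under the conditions of Algorithm 1, assume that the activation function of the discriminator has a bounded range and bounded derivatives everywhere, $\sigma(\cdot) \le B_\sigma$ and $\sigma'(\cdot) \le B_{\sigma'}$, and that the input space is compact so that $\|x\| \le B_x$. Then $\|g_w(x^{(i)}, z^{(i)})\| \le c_g$ for some constant $c_g$, where $g_w$ denotes the per-example gradient of the discriminator.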

Remark. The ReLU and Softplus activation functions are unbounded, but boundedness still holds: they are continuous, so on a compact input space their values stay within a bounded range.

Lemma 2

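Given the sampling probability $q$, the number of discriminator iterations in each inner loop $n_d$, and the privacy violation $\delta$, for arbitrary $\epsilon > 0$ the parameters of the discriminator guarantee $(\epsilon, \delta)$-differential privacy w.r.t. all the data points used in that outer loop if we choose:

$$\sigma_n = \frac{2q\sqrt{n_d \log(1/\delta)}}{\epsilon}$$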
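A quick numeric sketch of the noise choice in Lemma 2, assuming the noise scale takes the form $\sigma_n = 2q\sqrt{n_d \log(1/\delta)}/\epsilon$ as stated in Xie et al. (2018); check the paper before relying on it. The function name `dpgan_noise_scale` and the example values are hypothetical.

```python
import math

def dpgan_noise_scale(q, n_d, delta, epsilon):
    """Noise scale sigma_n under the assumed form 2q * sqrt(n_d * log(1/delta)) / epsilon."""
    if not (0 < delta < 1) or epsilon <= 0:
        raise ValueError("need 0 < delta < 1 and epsilon > 0")
    return 2 * q * math.sqrt(n_d * math.log(1 / delta)) / epsilon

# Example values (assumed): 1% sampling rate, 5 critic iterations per outer loop.
sigma_loose = dpgan_noise_scale(q=0.01, n_d=5, delta=1e-5, epsilon=1.0)
sigma_tight = dpgan_noise_scale(q=0.01, n_d=5, delta=1e-5, epsilon=0.5)
```

Halving the privacy budget doubles the required noise, which is the trade-off between privacy and sample quality in this setup.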

Proof. See the paper (Xie et al., 2018).

Theorem

Algorithm 1 learns a generator that guarantees $(\epsilon, \delta)$-DP.

References

- Xie, L., Lin, K., Wang, S., Wang, F., & Zhou, J. (2018). Differentially Private Generative Adversarial Network (arXiv:1802.06739). arXiv. https://doi.org/10.48550/arXiv.1802.06739